Designing applications to support rollback

When designing and changing an application, there are techniques you can use to make it better prepared to support rollbacks. One option is to make sure every change is backwards compatible with the previous version. For example, schema changes can be made in a way that doesn't break the old application, such as by exposing the old shape of the data through views. Similarly, it is common practice to prepare rollback scripts if the… TeamCity is a Java-based build management and continuous integration server.
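As a minimal sketch of the "use views" idea, assuming SQLite 3.25+ (for `RENAME COLUMN`) and a hypothetical `users` table whose `fullname` column is renamed to `display_name` in v2: the migration keeps a `users` view in the v1 shape so old code keeps reading, and a prepared rollback script undoes the change in reverse order.

```python
import sqlite3


def create_v1_schema(conn: sqlite3.Connection) -> None:
    """Hypothetical schema exactly as the old (v1) application expects it."""
    conn.executescript("""
        CREATE TABLE users (id INTEGER PRIMARY KEY, fullname TEXT NOT NULL);
        INSERT INTO users (fullname) VALUES ('Ada Lovelace');
    """)


def migrate_v1_to_v2(conn: sqlite3.Connection) -> None:
    """v2 renames the column but keeps a 'users' view with the v1 shape for old readers."""
    conn.executescript("""
        ALTER TABLE users RENAME TO users_v2;
        ALTER TABLE users_v2 RENAME COLUMN fullname TO display_name;
        CREATE VIEW users AS SELECT id, display_name AS fullname FROM users_v2;
    """)


def rollback_v2_to_v1(conn: sqlite3.Connection) -> None:
    """Prepared rollback script: undo the migration steps in reverse order."""
    conn.executescript("""
        DROP VIEW users;
        ALTER TABLE users_v2 RENAME COLUMN display_name TO fullname;
        ALTER TABLE users_v2 RENAME TO users;
    """)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    create_v1_schema(conn)
    migrate_v1_to_v2(conn)
    # A v1-style query still works against the v2 schema through the view.
    print(conn.execute("SELECT fullname FROM users").fetchall())
    rollback_v2_to_v1(conn)
    print(conn.execute("SELECT fullname FROM users").fetchall())
```

This only keeps old *reads* working; in SQLite, old writes through the view would additionally need `INSTEAD OF` triggers.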

I currently build ephemeral Linux web servers using a combination of Ansible, Packer, Serverspec and AWS.

When a server definition is committed to source control, the build system fires up Packer (via Vagrant) with the Ansible local provisioner and, if the tests pass, stores the image as an AMI in AWS. This works great: the servers are created from scratch every time, they are fully documented (through source), and builds and image deployment are fully automated by the existing build system, so there is no room for human error.

I want to implement our first Windows server using a similarly modern workflow. Unfortunately, I am finding it difficult to find a clear stack for the Windows side that would let me work in the way that has been so successful on our Linux servers.

So, my question to all the Windows infrastructure coders out there: what tech stack are you running to build and manage Windows server builds and changes, and, secondly, what does your CI/CD workflow look like?

Edit: I wrote this right before going to bed and botched the title :-(

We're basically doing the same thing, but replacing Ansible with plain PowerShell provisioners in Packer to install the base packages (Splunk/Datadog/Octopus Deploy, etc.).
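A rough sketch of what "PowerShell provisioners in Packer" can look like, written as a Python function that emits a Packer JSON template (HCL2 is the newer format; JSON is used here only because it maps directly onto a dict). The region, source AMI, instance type, script paths and the WinRM bootstrap user-data script are all hypothetical placeholders.

```python
import json

# Hypothetical provisioning scripts standing in for Ansible roles.
BASE_PACKAGE_SCRIPTS = [
    "scripts/install-splunk-forwarder.ps1",
    "scripts/install-datadog-agent.ps1",
    "scripts/install-octopus-tentacle.ps1",
]


def windows_base_image_template(region: str, source_ami: str, scripts: list) -> dict:
    """Return a Packer JSON template (as a dict) for a Windows base AMI."""
    return {
        "builders": [{
            "type": "amazon-ebs",
            "region": region,
            "source_ami": source_ami,            # placeholder base Windows AMI ID
            "instance_type": "t3.large",
            "ami_name": "windows-base-{{timestamp}}",
            # Windows builds talk to the instance over WinRM instead of SSH.
            "communicator": "winrm",
            "winrm_username": "Administrator",
            "winrm_use_ssl": True,
            "winrm_insecure": True,
            # Hypothetical script that enables WinRM on first boot (not shown).
            "user_data_file": "scripts/bootstrap-winrm.ps1",
        }],
        "provisioners": [
            # Plain PowerShell scripts install the base packages.
            {"type": "powershell", "scripts": scripts},
            {"type": "windows-restart"},
        ],
        # Records build metadata, including the new AMI ID, in a JSON file.
        "post-processors": [{"type": "manifest", "output": "packer-manifest.json"}],
    }


if __name__ == "__main__":
    template = windows_base_image_template("us-east-1", "ami-PLACEHOLDER", BASE_PACKAGE_SCRIPTS)
    print(json.dumps(template, indent=2))
```

Saved to a file, the output could then be built with `packer build <file>.json`.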

We're not doing it 100% immutably. Once Packer has created the image (which we do in TeamCity), it outputs the new AMI ID as an artifact, which then feeds into all the other TeamCity projects as an artifact dependency. Those projects are essentially CloudFormation stacks for different applications, each taking an AMI ID parameter that is passed in from the artifact Packer created. This in turn triggers a rolling update of every stack with the new AMI.
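One way the "AMI ID as an artifact" hand-off could work, assuming Packer's `manifest` post-processor is enabled: read the latest run's AMI ID from the manifest and turn it into the `Parameters` list that CloudFormation's UpdateStack accepts. The parameter name `AmiId` and the sample manifest contents are hypothetical; in the template it would typically be declared with type `AWS::EC2::Image::Id`.

```python
import json

# Hypothetical manifest content; the real file is written by Packer's manifest post-processor.
SAMPLE_MANIFEST = """{
  "builds": [
    {"name": "amazon-ebs", "builder_type": "amazon-ebs", "build_time": 1500000000,
     "files": null, "artifact_id": "us-east-1:ami-0aaaaaaaaaaaaaaaa", "packer_run_uuid": "run-1"},
    {"name": "amazon-ebs", "builder_type": "amazon-ebs", "build_time": 1500086400,
     "files": null, "artifact_id": "us-east-1:ami-0bbbbbbbbbbbbbbbb", "packer_run_uuid": "run-2"}
  ],
  "last_run_uuid": "run-2"
}"""


def latest_ami_id(manifest_text: str) -> str:
    """Return the AMI ID produced by the most recent run recorded in a Packer manifest."""
    manifest = json.loads(manifest_text)
    run_uuid = manifest["last_run_uuid"]
    # The manifest accumulates builds across runs, so keep only the latest run's entries.
    builds = [b for b in manifest["builds"] if b["packer_run_uuid"] == run_uuid]
    if not builds:
        raise ValueError("no builds recorded for the last run")
    # amazon-ebs artifact IDs look like "us-east-1:ami-..."; take the first region's AMI.
    first_region = builds[-1]["artifact_id"].split(",")[0]
    return first_region.split(":", 1)[1]


def stack_parameters(ami_id: str) -> list:
    """Build the Parameters list for a CloudFormation UpdateStack call ("AmiId" is hypothetical)."""
    return [{"ParameterKey": "AmiId", "ParameterValue": ami_id}]


if __name__ == "__main__":
    print(json.dumps(stack_parameters(latest_ami_id(SAMPLE_MANIFEST))))
```

The same value could be passed on the command line with `aws cloudformation update-stack --use-previous-template --parameters ParameterKey=AmiId,ParameterValue=<ami-id>`.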

As each new instance comes online (one by one), it talks to Octopus (via userdata/PowerShell), registers itself with its correct deployment group/environment, and triggers an automatic deployment of whatever application that specific server needs (based on tag data in CloudFormation). If the auto-deploy is triggered and succeeds, the instance tells CloudFormation to continue the rolling update; otherwise, the update rolls back. Here's a basic example of how I handle the Packer side of it for Windows (if it helps):

Thanks for this detailed answer, very interesting. A few follow-up questions:

- What do you do for tests at this layer? Or do you not test here and instead catch errors further downstream in that layer's tests?
- As you are using Packer directly without Vagrant, what does your local development workflow look like when coding a new server? Does it get a bit clumsy?
- You said you are not doing it 100% immutably. Why is that?
- As you are not using a proper config management tool, you lose a role repository (i.e. Ansible Galaxy). Have you come up with a solution for reusing roles? (I.e. if you update the .NET Core role's PowerShell script, you wouldn't need to copy and paste that code into every server?)

No tests at the initial layer, because the AMI is simply shared with the testing AWS account first as the testing step. If there were an issue, it would become clear once the AMI was shared with that account and applications were running (logging/monitoring/alerting are huge here). We wait a day after sharing to testing before promoting into acceptance, and then another day before promoting into prod.
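The rolling update with automatic rollback described in this reply (each new instance registers, deploys, and only then lets the update continue) can be modelled as a toy simulation. This is not the CloudFormation API: in real CloudFormation the equivalent is an `AutoScalingRollingUpdate` update policy with `WaitOnResourceSignals`, where instances report success or failure with `cfn-signal`. The `bootstrap` callback is a hypothetical stand-in for the userdata step.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Instance:
    """Toy stand-in for an EC2 instance; only the AMI it was launched from matters here."""
    ami_id: str


def rolling_update(fleet: List[Instance], new_ami: str,
                   bootstrap: Callable[[Instance], bool]) -> bool:
    """Replace instances one at a time (batch size 1), rolling back on the first failure.

    `bootstrap` stands in for: register with the deployment tool, deploy the app,
    then report success (True) or failure (False).
    """
    original_amis = [inst.ami_id for inst in fleet]
    for i in range(len(fleet)):
        candidate = Instance(ami_id=new_ami)
        if not bootstrap(candidate):
            # Failure signal: put every instance back on the AMI it had before the update.
            fleet[:] = [Instance(ami_id=ami) for ami in original_amis]
            return False
        # Success signal: the new instance replaces the old one and the update moves on.
        fleet[i] = candidate
    return True


if __name__ == "__main__":
    fleet = [Instance("ami-old") for _ in range(3)]
    ok = rolling_update(fleet, "ami-new", bootstrap=lambda inst: True)
    print(ok, [inst.ami_id for inst in fleet])
```

Replacing one instance at a time means a bad image or failed deploy is caught on the first instance, while the rest of the fleet is still serving traffic on the old AMI.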

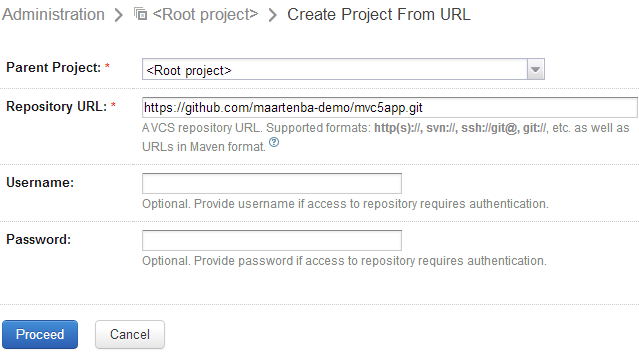

We run Packer in TeamCity; there's no need for Vagrant. TeamCity calls the AWS APIs to create the EC2 instance, then snapshots it and shares the AMI with the subsequent AWS accounts, as mentioned above.
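The account-by-account promotion (testing, then acceptance a day later, then prod a day after that) could be gated by a small function like the one below. It is a sketch: the environment names mirror the thread, but the "hold if any alerts are open" rule is an assumption about how monitoring would feed in. The actual sharing step could use boto3's `modify_image_attribute` with `LaunchPermission={"Add": [{"UserId": account_id}]}`.

```python
from datetime import datetime, timedelta
from typing import List, Optional

# Order in which an AMI is shared across accounts, as described in the thread.
PROMOTION_ORDER = ["testing", "acceptance", "prod"]
SOAK_TIME = timedelta(days=1)


def next_promotion(current_env: str, shared_at: datetime, now: datetime,
                   open_alerts: List[str]) -> Optional[str]:
    """Return the next environment to share the AMI with, or None if not allowed yet."""
    idx = PROMOTION_ORDER.index(current_env)
    if idx == len(PROMOTION_ORDER) - 1:
        return None  # already in prod, nothing left to promote to
    if open_alerts:
        return None  # assumption: any open alert holds the AMI where it is
    if now - shared_at < SOAK_TIME:
        return None  # still soaking in the current environment
    return PROMOTION_ORDER[idx + 1]


if __name__ == "__main__":
    shared = datetime(2024, 1, 1, 9, 0)
    print(next_promotion("testing", shared, shared + timedelta(days=1), []))
```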

100% immutability is not efficient for software deployments. We do over 30 deployments to prod a day (85 microservices), and rebuilding a new image for each deploy would slow down developer velocity by a ton. So everything is immutable except the CD aspect, which Octopus handles. In that sense, there is no need for config management. Every CloudFormation stack has a set of tags that define its 'role'; those tags are passed into an internal PowerShell function that runs during userdata to fill in the templates (Splunk/Datadog/Octopus) that were placed on disk during the Packer build.
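The tag-driven templating step is described as an internal PowerShell function; the Python sketch below only illustrates the idea with `string.Template`. The template text, the tag keys `Role` and `Environment`, and the hostname are hypothetical, and how the tags are fetched at boot (for example via EC2 DescribeTags) is left out.

```python
from string import Template

# Hypothetical agent config template that the image build left on disk.
DATADOG_TEMPLATE = Template(
    "hostname: $hostname\n"
    "tags:\n"
    "  - role:$role\n"
    "  - env:$environment\n"
)


def render_from_tags(template: Template, tags: dict, hostname: str) -> str:
    """Fill a config template from the stack's role tags.

    Missing tags raise KeyError, so a misconfigured stack fails at boot instead of
    silently producing a half-filled config.
    """
    values = {
        "hostname": hostname,
        "role": tags["Role"],
        "environment": tags["Environment"],
    }
    return template.substitute(values)


if __name__ == "__main__":
    print(render_from_tags(DATADOG_TEMPLATE, {"Role": "api", "Environment": "acceptance"}, "web-01"))
```

Because the role lives in stack tags rather than in the image, one base image can serve every role; only the rendered config differs per stack.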

If we're updating anything such as the .NET Core version, we do that in Packer and make sure it's part of the base image. It's the 100% cattle mentality, though: if you need to make a change to your base image, make it and then push the image through the TAP environments (rolling updates happen). We work with developers to ensure they build applications within a clear set of guidelines, which means we avoid silly requests that fall outside our service catalog and would therefore require complex config management.